I decided to throw together a simple zoominfo parser that works through Google's cache.

Language: Python >= 3.8

Installation: in the script's folder, run `pip install -r requirements.txt`


Example run:

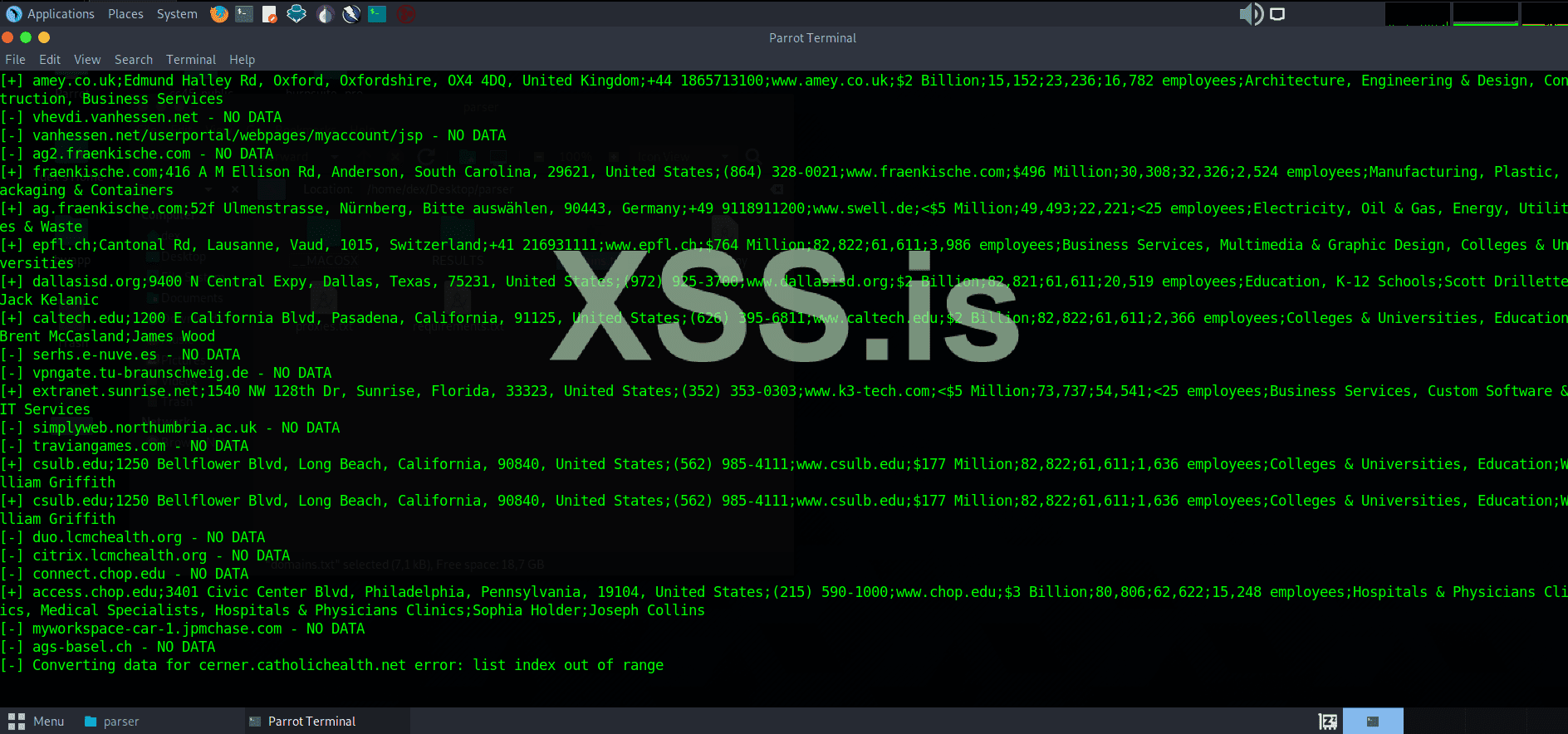


requirements.txt:

```
beautifulsoup4==4.11.1
bs4==0.0.1
certifi==2022.9.24
charset-normalizer==2.1.1
fake-useragent==0.1.11
idna==3.4
requests==2.28.1
soupsieve==2.3.2.post1
urllib3==1.26.12
PySocks==1.7.1  # required by requests for socks5:// proxies (-p)
```

Running:

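- put the domains into domains.txt (one per line), or pass a different file name with -d

- put the socks5 proxies into proxies.txt (one per line, e.g. host:port; only read with -p)

- results are written to the RESULTS folder: good.txt for parsed rows, bad.txt for domains with no data, errors_search.txt / errors_parse.txt / errors_convert.txt for failures at each step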
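To post-process RESULTS/good.txt, each line can be split back into named columns. A minimal sketch, assuming all twelve fields are enabled and that no field value itself contains a semicolon (commas, as in addresses and industries, are fine); `FIELDS`, `parse_good_line` and `read_good_file` are hypothetical names, not part of main.py:

```python
# Column names from the output format; assumes all twelve fields are enabled
FIELDS = [
    'domain', 'headquarters', 'phone', 'symbol', 'website', 'revenue',
    'SIC', 'NAICS', 'employees', 'industry', 'CFO', 'CTO',
]


def parse_good_line(line):
    """Split one RESULTS/good.txt line into a dict keyed by FIELDS.

    Hypothetical helper: assumes no value contains ';' (commas are fine).
    """
    values = line.rstrip('\n').split(';')
    if len(values) != len(FIELDS):
        raise ValueError(f'expected {len(FIELDS)} columns, got {len(values)}')
    return dict(zip(FIELDS, values))


def read_good_file(path):
    """Yield one dict per non-empty line of good.txt."""
    with open(path) as f:
        for line in f:
            if line.strip():
                yield parse_good_line(line)
```

Usage: `for row in read_good_file('RESULTS/good.txt'): print(row['domain'], row['revenue'])`. If some fields are disabled, FIELDS has to be trimmed to match the columns actually written.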

```
usage: main.py [-h] [-d D_FILE] [-p] [-t THREADS]

Zoominfo.com parser through Google Cache

optional arguments:
  -h, --help  show this help message and exit
  -d D_FILE   Domains file name. Default: domains.txt
  -p          Use socks5 proxies. Default: False
  -t THREADS  Threads number. Default: 1
```

Script output format: domain/corp_name;headquarters;phone;symbol;website;revenue;SIC;NAICS;employees;industry;CFO;CTO

Example:

```
airbnb.com;888 Brannan St, San Francisco, California, 94103, United States;(207) 763-4652;ABNB;www.airbnb.com;$6 Billion;47,472;72,721;6,132 employees;Hospitality, Travel Agencies & Services, Content & Collaboration Software;Dave Stephenson;Aristotle Balogh
```

To drop fields you don't need, comment out the corresponding entries of the check_words dictionary in answers_to_dict. For example:

```python
check_words = {
    'headquarters': 'in ',
    'phone': 'is ',
    # 'symbol': 'is ',
    'website': 'is ',
    'revenue': 'is ',
    # 'SIC': 'SIC: ',
    # 'NAICS': 'NAICS: ',
    # 'employees': 'has ',
    # 'industry': 'of: ',
    'CFO': 'CFO is ',
    'CTO': 'CTO is ',
}
```
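Each check_words entry pairs a keyword that identifies an FAQ answer with the separator after which the value starts. A standalone sketch of that lookup, written as a hypothetical `extract_fields` function and exercised with made-up answer sentences (the real ZoomInfo wording may differ). It splits only on the first separator, so a value that itself contains the separator is kept whole, and it returns the fields in check_words order with empty strings for missing ones:

```python
def extract_fields(answers, check_words):
    """Map each check_words key to the text after its separator.

    Hypothetical standalone version of the answers_to_dict logic: a later
    matching answer overwrites an earlier one, and the result keeps
    check_words order, with '' for fields that were not found.
    """
    found = {}
    for answer in answers:
        for word, sep in check_words.items():
            if word in answer and sep in answer:
                # maxsplit=1: keep everything after the first separator
                found[word] = answer.split(sep, 1)[1].strip()
    return {word: found.get(word, '') for word in check_words}


if __name__ == '__main__':
    # Made-up sample text; "Main" contains "in ", so split('in ')[1] would give '100 Ma'
    sample = ["Acme's headquarters is located in 100 Main St, Springfield"]
    print(extract_fields(sample, {'headquarters': 'in '}))
    # prints {'headquarters': '100 Main St, Springfield'}
```

Returning every key, even when empty, keeps the semicolon-separated columns aligned when a company page lacks one of the answers.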

```python
import argparse
import os
import sys
from concurrent.futures import ThreadPoolExecutor
from queue import Queue
from threading import RLock
from urllib.parse import parse_qs, quote_plus, urlparse

try:
    import requests
    from bs4 import BeautifulSoup
    from fake_useragent import UserAgent
except ImportError as err:
    print(f'[-] Import error: {err}\n\t Try to: pip install -r requirements.txt')
    sys.exit(1)

USE_PROXY = False
LOCK = RLock()  # serializes console output, file appends and proxy reloads
Q_PROXIES = Queue()
PROXIES_FILE = 'proxies.txt'

PATH = os.path.dirname(__file__)
RESULTS = os.path.join(PATH, 'RESULTS')
os.makedirs(RESULTS, exist_ok=True)


class GoogleSearch:
    def __init__(self, proxy=None):
        # socks5:// proxies require PySocks to be installed alongside requests
        self.proxies = {
            'http': f'socks5://{proxy}',
            'https': f'socks5://{proxy}',
        } if proxy else None
        self.ua = UserAgent().random

    def __get_soup(self, url):
        resp = requests.get(
            url=url,
            proxies=self.proxies,
            headers={'User-Agent': self.ua},
        )
        return BeautifulSoup(resp.text, 'html.parser')

    def search_one(self, domain):
        """Return the first zoominfo.com URL Google finds for the domain, or None."""
        query = quote_plus(f'site:zoominfo.com {domain}')
        url = f'https://google.com/search?q={query}'
        soup = self.__get_soup(url)
        for a in soup.find_all('a'):
            a_href = a.get('href')
            # Result links are expected in the form /url?...&url=<target>
            if a_href and a_href.startswith('/url'):
                a_parsed = urlparse(a_href, 'http')
                a_url = parse_qs(a_parsed.query).get('url')
                return a_url[0] if a_url else None
        return None

    def parse_zoom_url(self, zoom_url):
        """Return the FAQ answers from Google's cached copy of the ZoomInfo page."""
        url = f'https://webcache.googleusercontent.com/search?q=cache:{zoom_url}'
        soup = self.__get_soup(url)
        return [answer.text for answer in soup.find_all(class_='faq-answer')]


def safe_print(message):
    with LOCK:
        print(message)


def write_file(filename, data):
    with LOCK:
        with open(filename, 'a') as f:
            f.write(f'{data}\n')


def read_file(filename):
    """Return the non-empty lines of a file; exit if it cannot be opened."""
    try:
        with open(filename, 'r') as f:
            return [line.rstrip() for line in f if line.rstrip()]
    except IOError as err:
        print(f'[-] {filename} open error: {err}')
        sys.exit()


def load_proxy_list(filename):
    # Refill the queue from the file once every proxy has been handed out
    with LOCK:
        if Q_PROXIES.empty():
            for p in read_file(filename):
                Q_PROXIES.put(p)


def check_proxy(proxy):
    """Return True if Google answers through the proxy with a non-error status."""
    try:
        return requests.get(
            url='https://google.com',
            timeout=7,
            proxies={
                'http': f'socks5://{proxy}',
                'https': f'socks5://{proxy}',
            },
        ).ok
    except Exception:
        return False


def get_proxy():
    # Take proxies from the queue until one of them works
    while True:
        load_proxy_list(PROXIES_FILE)
        proxy = Q_PROXIES.get()
        if check_proxy(proxy):
            return proxy


def answers_to_dict(answers):
    # field name -> separator after which the value starts in the FAQ answer
    check_words = {
        'headquarters': 'in ',
        'phone': 'is ',
        'symbol': 'is ',
        'website': 'is ',
        'revenue': 'is ',
        'SIC': 'SIC: ',
        'NAICS': 'NAICS: ',
        'employees': 'has ',
        'industry': 'of: ',
        'CFO': 'CFO is ',
        'CTO': 'CTO is ',
    }
    answers_dict = {}
    for answer in answers:
        for word, sep in check_words.items():
            if word in answer and sep in answer:
                # Split once so a value that contains the separator stays whole
                answers_dict[word] = answer.split(sep, 1)[1]
    if not answers_dict:
        return {}
    # Fixed column order; fields missing on the page become empty columns
    return {word: answers_dict.get(word, '') for word in check_words}


def worker(domain):
    proxy = get_proxy() if USE_PROXY else None
    g = GoogleSearch(proxy=proxy)

    try:
        zoom_url = g.search_one(domain)
    except Exception as err:
        safe_print(f'[-] Searching {domain} error: {err}')
        write_file(f'{RESULTS}/errors_search.txt', f'{domain} - {err}')
        return

    try:
        # No result link means there is nothing to fetch from the cache
        answers = g.parse_zoom_url(zoom_url) if zoom_url else []
    except Exception as err:
        safe_print(f'[-] Parsing url for {domain} error: {err}')
        write_file(f'{RESULTS}/errors_parse.txt', f'{domain} - {err}')
        return

    try:
        data = answers_to_dict(answers)
    except Exception as err:
        safe_print(f'[-] Converting data for {domain} error: {err}')
        write_file(f'{RESULTS}/errors_convert.txt', f'{domain} - {err}')
        return

    if not data:
        safe_print(f'[-] {domain} - NO DATA')
        write_file(f'{RESULTS}/bad.txt', domain)
        return

    str_data = ';'.join([domain, *data.values()])
    safe_print(f'[+] {str_data}')
    write_file(f'{RESULTS}/good.txt', str_data)


def get_args():
    parser = argparse.ArgumentParser(description='Zoominfo.com parser through Google Cache')
    parser.add_argument('-d', dest='d_file', default='domains.txt', help='Domains file name. Default: %(default)s')
    parser.add_argument('-p', dest='use_proxy', action='store_true', help='Use socks5 proxies. Default: %(default)s')
    parser.add_argument('-t', dest='threads', type=int, default=1, help='Threads number. Default: %(default)s')
    return parser.parse_args()


def main():
    global USE_PROXY
    args = get_args()
    domains = read_file(args.d_file)

    if args.use_proxy:
        USE_PROXY = True
        load_proxy_list(PROXIES_FILE)
        print(f'[~] Loaded {Q_PROXIES.qsize()} proxies')

    with ThreadPoolExecutor(max_workers=args.threads) as pool:
        # list() drains the iterator so exceptions raised inside workers surface here
        list(pool.map(worker, domains))


if __name__ == '__main__':
    main()
```

Important:

- the script has not been tested with (socks5) proxies; Google shows a captcha when it runs with several threads, but lets one-off runs through

- technically this is a beta version

- I'll incorporate any fixes users suggest
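The captcha problem noted above currently looks like missing data: a captcha page has no result links, so the domain most likely ends up in bad.txt. A minimal sketch of an explicit check, assuming (this is not from the script) that Google blocks clients with HTTP 429 and/or a redirect to a google.com/sorry/... page; `looks_like_google_captcha` is a hypothetical helper, and the heuristic may break whenever Google changes its block page:

```python
from urllib.parse import urlparse


def looks_like_google_captcha(status_code, final_url):
    """Heuristic check for Google's "unusual traffic" block.

    Assumption: a blocked client gets HTTP 429 and/or ends up on a
    google.com/sorry/... page. Google may change this at any time.
    """
    if status_code == 429:
        return True
    parsed = urlparse(final_url)
    host = parsed.netloc.lower()
    on_google = host == 'google.com' or host.endswith('.google.com')
    return on_google and parsed.path.startswith('/sorry/')
```

It could be called inside `__get_soup` with `resp.status_code` and `resp.url` (the final URL after redirects), raising an exception on a match so that worker records the domain in one of the errors_*.txt files instead of bad.txt.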
