Is it safe to use ChatGPT in Tor Browser when you ask simple coding questions, not obvious ones that are connected with malware development? Just simple proof-of-concept things that you can rewrite and use?

using chatgpt ai for malware development

- Thread starter: Pharos

Think about these and answer them for yourself:

- Are you using a personal account, or one easily linked to your real-world identity (reused emails or nicknames, accounts not created over Tor, etc.)?

- What do you intend to do with this malware? Is it only for study, or do you intend to use it in the "jungle"?

I'm very paranoid about my opsec with maldev, so what I would tell you to do is:

- don't let anyone know that you write malware: Google, Meta, OpenAI, X/Twitter, Stack Overflow, Microsoft, Reddit, Kaspersky, your neighbor, etc.;

- change how you write questions to make them anonymous;

- always refactor the code generated by ChatGPT and other AIs (and of course, do not reuse this code in other personal projects).

An example of what happens when you have bad opsec when writing malware:

https://www.vice.com/en/article/uzb...ons-uncovered-due-to-spectacularly-bad-opsec/

Mate, if you're connecting to the official OpenAI website (https://), then I personally see no point in using Tor Browser. The nodes would be different and no onion-tunnelization would apply, IMHO.

Use the private browsing function or a separate browser profile. Clear cache & cookies, use a VPN and separate OpenAI account profiles. Should keep ya safe. Cheers.

Please note that this user is banned

You're best off learning a bit about LLMs and developing your own language models. Here is a decent video that will break it down for you.

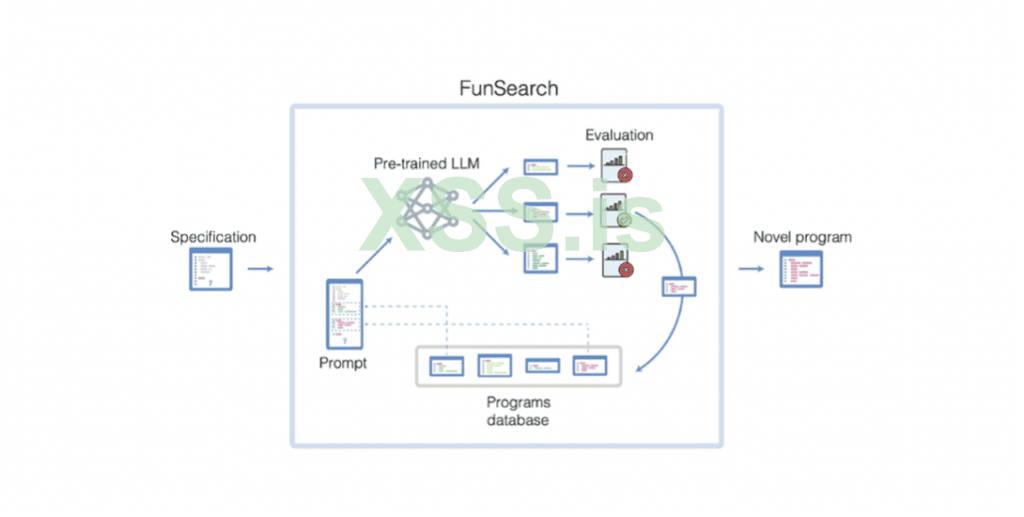

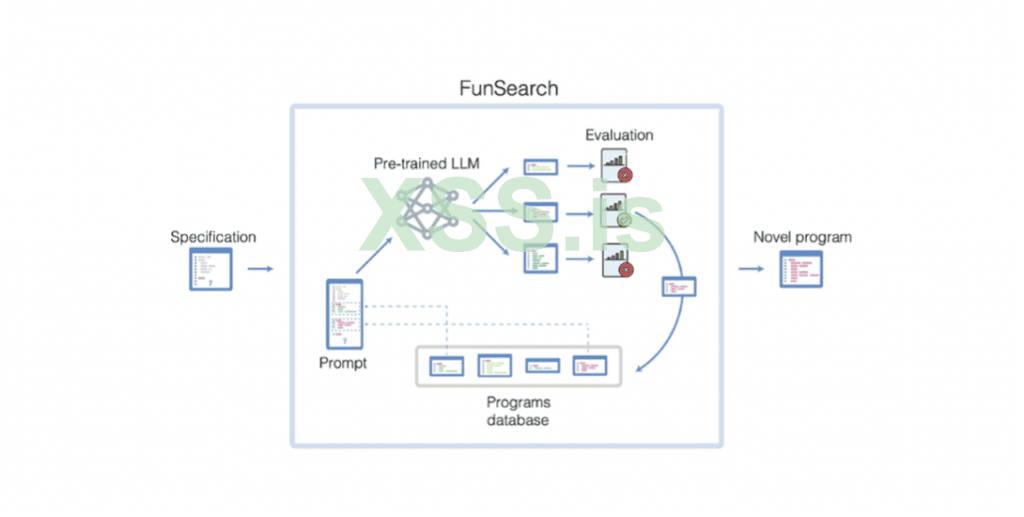

It may look a lot like this

But just take it slow, get your bearings, then head over to https://huggingface.co (it's like GitHub, but for AI) and ease yourself into it.

Over the next 5-10 years you may want to learn this thoroughly IMO.

But Yes... AI can generate Malware.

This was made with AI

giphy.com

And this

giphy.com

and several other very good projects i have done...

It has also helped me crypt.. bypass WD.. Develop sites & Mini Apps... Telegram Bots...

Shit... Even turn a still image into animation

Which model do you use to create such results?

That's not a safe option, in my opinion. ChatGPT is not even that good at coding. It may produce incorrect or unsafe code, especially in low-level languages like C/C++ or ASM.

Don't use ChatGPT for these tasks; there are many alternatives, for example Gemini by Google or Uncensored AI by @d0lly_cryb4by. If you set the prompt correctly, it will give you code that fits your criteria.

How safe is Uncensored AI?

That's paranoid. I don't think you're going to make malware for taking out a uranium factory! Yes, you can; it's safe, and if it's not safe, ChatGPT mostly just won't answer you.

That's correct. If you are going to use an LLM, use it locally and offline on your own machine, like Llama Coder, DeepSeek and others; it is safer.

It would be fine if you are asking simple coding questions, even if they touch on concepts like "proof of concept". But make sure to frame your questions differently, in a way that would not raise any suspicion, because there is something called behavioral tracking: even with Tor, your prompt patterns, typing speed, and phrasing can be used to identify or profile a user over time.

And speaking of anonymity through Tor: I'm concerned that if you're signed in, especially with a consistent OpenAI account, using Tor provides less anonymity than you might think, because cookies and logins can easily deanonymize you.

The best alternative, in my opinion, is to use local LLMs. These run locally on your device, with no internet connection required at all.

But in case you really want to use ChatGPT or anything like that, make sure to harden your OPSEC.

Use ChatGPT via a burner VPS (e.g. through Tails or Whonix) over Tor or a VPN, so that you're not connecting from your real device or home network. Also combine Tor with temporary email services and isolated browser sessions; this setup will help you maintain anonymity while still getting quality responses.

Hope this helps!

To everyone suggesting to use LLMs locally... can you please not? You'll never get even 1% of the speed, power and depth of what you get from commercial GPTs by hosting your hobby AI on your 1337 h4x0r gamer rig. If you're doing it for shits and giggles, sure, but it'll never be something you can actually use in production, to write real code or at least something that helps you conceptualize solutions and research new attack vectors.

As to OP's question, is it safe? Depends how obvious you get with it, and let's not forget that pentesting and red teaming are not inherently illegal. Intent is what matters most, and besides, many of the malware development techniques out there are already discussed and written about publicly every day for research purposes. Those with 2 firing neurons will get the hint on how they should approach this problem.

And for the highly paranoid... VPS and paying with virtual debit card under a different name still works. Wild, I know.
