Can anyone recommend a fast way or software to extract leads (emails) from a URL (domain)?


Miscellaneous: URL Extractor

- Thread starter: seal


What kind of solution do you want? An extension? A script? An executable? For Windows or Linux? What is your use case, i.e. where are you planning to grab links from? SERPs and DOM-heavy sites are a bit different, for example.

- Thread starter

- #3

I want anything that scans through my website URL and extracts the emails on it, like an email extractor, but it should be fast, very fast.

Maybe:

Code:

curl -s http://example.com | grep -E -o "[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}"

If you want to use concurrency, you may also need a proxy to avoid rate limits.
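For anyone who wants the same matching step without a shell pipeline, here is a minimal Go sketch of what the grep expression above does, with deduplication added. The function name `uniqueEmails` and the sample input are hypothetical, not from the post; the pattern is copied from the one-liner.

```go
package main

import (
	"fmt"
	"regexp"
	"sort"
	"strings"
)

// emailPattern is the same expression used in the grep one-liner.
var emailPattern = regexp.MustCompile(`[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}`)

// uniqueEmails (hypothetical helper) returns the distinct matches in text,
// lowercased and sorted so the output is stable between runs.
func uniqueEmails(text string) []string {
	seen := make(map[string]struct{})
	for _, m := range emailPattern.FindAllString(text, -1) {
		seen[strings.ToLower(m)] = struct{}{}
	}
	out := make([]string, 0, len(seen))
	for e := range seen {
		out = append(out, e)
	}
	sort.Strings(out)
	return out
}

func main() {
	// Sample input only; in practice this would be the fetched page body.
	page := `<a href="mailto:Info@example.com">info@example.com</a> or sales@example.org`
	fmt.Println(uniqueEmails(page))
}
```

Lowercasing before deduplication is a design choice here: it treats `Info@example.com` and `info@example.com` as one address, which grep alone would not do.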

chromewebstore.google.com

Link Extractor V2 - Chrome Web Store

Extracts all links from a page and copies them to the clipboard.

curl would probably only be effective if you used something like the V8 engine and manually set the headers (X-Forwarded-For, -A for the user agent), but I don't really work with leads, so maybe someone else can give a better answer.

Python:

import re

# Loose pattern: local part, "@", then a dotted domain.
email_pattern = r'[a-zA-Z0-9_.+-]+@[a-zA-Z0-9-]+\.[a-zA-Z0-9-.]+'

# Read the whole input file into memory.
with open('input.txt', 'r') as infile:
    content = infile.read()

emails = re.findall(email_pattern, content)

# Write one address per line.
with open('xss.txt', 'w') as outfile:
    for email in emails:
        outfile.write(email + '\n')

print("Emails extracted and saved to xss.txt")

Another reason to love Python.

Code:

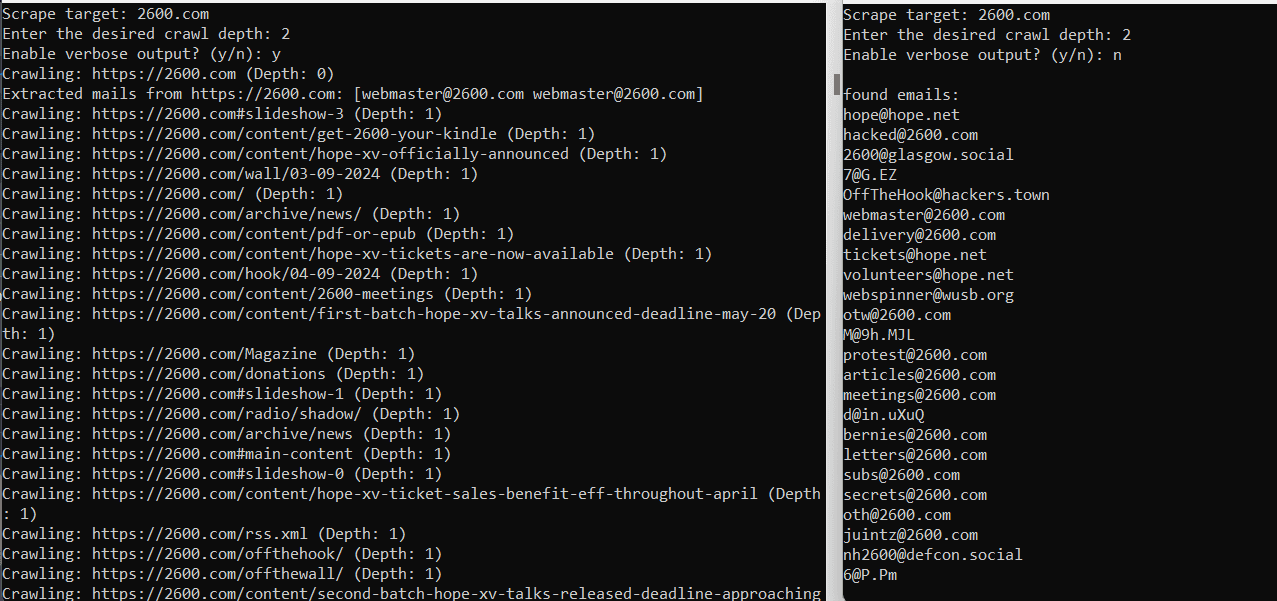

package main

import (
	"bufio"
	"fmt"
	"net/url"
	"os"
	"os/exec"
	"regexp"
	"strings"
	"sync"
	"time"

	"github.com/PuerkitoBio/goquery"
	"github.com/dop251/goja"
)

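var (
	// The original class [A-Z|a-z] also matched a literal "|"; [A-Za-z] is what was meant.
	emailRegex   = regexp.MustCompile(`\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b`)
	visitedURLs  = make(map[string]bool)   // URLs already crawled
	emailSet     = make(map[string]string) // email -> first page it was found on
	mutex        = sync.Mutex{}            // guards visitedURLs and emailSet
	maxRetries   = 3
	crawlDelay   = 2 * time.Second
	targetDomain string
	depthLimit   int
	excludeExt   = []string{".jpg", ".jpeg", ".png", ".gif", ".pdf"}
	verbose      bool
)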

func main() {
	reader := bufio.NewReader(os.Stdin)

	fmt.Print("Scrape target: ")
	input, _ := reader.ReadString('\n')
	targetDomain = strings.TrimSpace(input)
	if targetDomain == "" {
		fmt.Println("Invalid...provide a valid domain!")
		return
	}

	fmt.Print("Enter the desired crawl depth: ")
	input, _ = reader.ReadString('\n')
	_, err := fmt.Sscan(input, &depthLimit)
	if err != nil || depthLimit < 0 {
		fmt.Println("Invalid depth. Please provide a non-negative integer.")
		return
	}

	fmt.Print("Enable verbose output? (y/n): ")
	input, _ = reader.ReadString('\n')
	verbose = strings.TrimSpace(strings.ToLower(input)) == "y"

	targetURL := "https://" + targetDomain
	// crawl returns only after every child goroutine has finished,
	// so the extra 5-second sleep that followed it was not needed.
	crawl(targetURL, 0)
	printEmails()
}

// crawl fetches one page, records its emails, then follows same-domain links
// up to depthLimit, one goroutine per link.
func crawl(targetURL string, depth int) {
	if depth > depthLimit {
		return
	}

	// Mark the URL as visited under the lock so it is crawled only once.
	mutex.Lock()
	if visitedURLs[targetURL] {
		mutex.Unlock()
		return
	}
	visitedURLs[targetURL] = true
	mutex.Unlock()

	if verbose {
		fmt.Printf("Crawling: %s (Depth: %d)\n", targetURL, depth)
	}

	htmlContent, err := fetchPageWithCurl(targetURL)
	if err != nil {
		if verbose {
			fmt.Printf("Error fetching %s: %v\n", targetURL, err)
		}
		return
	}

	if requiresJavaScriptExecution(htmlContent) {
		htmlContent, err = executeJavaScript(htmlContent)
		if err != nil {
			if verbose {
				fmt.Printf("Error executing js on %s: %v\n", targetURL, err)
			}
			return
		}
	}

	extractEmails(htmlContent, targetURL)
	links := findLinks(htmlContent, targetURL)

	var wg sync.WaitGroup
	for _, link := range links {
		wg.Add(1)
		go func(link string) {
			defer wg.Done()
			time.Sleep(crawlDelay)
			crawl(link, depth+1)
		}(link)
	}
	wg.Wait()
}

// fetchPageWithCurl shells out to curl and retries up to maxRetries times.
func fetchPageWithCurl(targetURL string) (string, error) {
	var output []byte
	var err error

	for i := 0; i < maxRetries; i++ {
		cmd := exec.Command("curl", "-s", "-A", "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/85.0.4183.102 Safari/537.36", targetURL)
		output, err = cmd.Output()
		if err == nil {
			break
		}
		if verbose {
			fmt.Printf("Retrying fetch for %s (%d/%d)...\n", targetURL, i+1, maxRetries)
		}
		time.Sleep(2 * time.Second)
	}

	if err != nil {
		return "", err
	}
	return string(output), nil
}

func requiresJavaScriptExecution(html string) bool {
	return strings.Contains(html, "data-cfemail") || strings.Contains(html, "show-email-button")
}

// executeJavaScript passes the page to a goja VM.
// Note: goja implements ECMAScript only and provides no DOM, so DOMParser and
// document are undefined here and this function returns an error as written.
func executeJavaScript(html string) (string, error) {
	vm := goja.New()

	_, err := vm.RunString(`
		function decodeCloudflareEmail(encodedEmail) {
			const r = parseInt(encodedEmail.substr(0, 2), 16);
			let email = '';
			for (let n = 2; n < encodedEmail.length; n += 2) {
				const charCode = parseInt(encodedEmail.substr(n, 2), 16) ^ r;
				email += String.fromCharCode(charCode);
			}
			return email;
		}

		const domParser = new DOMParser();
		const doc = domParser.parseFromString(` + "`" + html + "`" + `, "text/html");
		const obfuscatedElements = doc.querySelectorAll('[data-cfemail]');
		obfuscatedElements.forEach(element => {
			const encodedEmail = element.getAttribute('data-cfemail');
			const decodedEmail = decodeCloudflareEmail(encodedEmail);
			element.textContent = decodedEmail;
		});
		doc.documentElement.outerHTML;
	`)
	if err != nil {
		return "", fmt.Errorf("Js execution error: %v", err)
	}

	processedHTML, err := vm.RunString("document.documentElement.outerHTML")
	if err != nil {
		return "", fmt.Errorf("Error getting processed Html: %v", err)
	}
	return processedHTML.String(), nil
}

// extractEmails records each new address together with the page it was first seen on.
func extractEmails(content, sourceURL string) {
	emails := emailRegex.FindAllString(content, -1)

	mutex.Lock()
	for _, email := range emails {
		if _, found := emailSet[email]; !found {
			emailSet[email] = sourceURL
		}
	}
	mutex.Unlock()

	if verbose {
		fmt.Printf("Extracted mails from %s: %v\n", sourceURL, emails)
	}
}

// findLinks returns the absolute, in-scope URLs of all <a href> elements.
func findLinks(content, baseURL string) []string {
	var links []string

	doc, err := goquery.NewDocumentFromReader(strings.NewReader(content))
	if err != nil {
		if verbose {
			fmt.Printf("Error parsing html: %v\n", err)
		}
		return links
	}

	doc.Find("a").Each(func(i int, s *goquery.Selection) {
		href, exists := s.Attr("href")
		if exists {
			absoluteURL := resolveURL(href, baseURL)
			if isValidLink(absoluteURL) {
				links = append(links, absoluteURL)
			}
		}
	})
	return links
}

// resolveURL turns a possibly relative href into an absolute URL, or "" on error.
func resolveURL(href, base string) string {
	u, err := url.Parse(href)
	if err != nil {
		return ""
	}
	baseU, err := url.Parse(base)
	if err != nil {
		return ""
	}
	return baseU.ResolveReference(u).String()
}

// isValidLink accepts only http(s) links on targetDomain without an excluded extension.
func isValidLink(link string) bool {
	parsedURL, err := url.Parse(link)
	if err != nil {
		return false
	}
	isValid := (parsedURL.Scheme == "http" || parsedURL.Scheme == "https") &&
		parsedURL.Hostname() == targetDomain &&
		!hasExcludedExtension(parsedURL.Path)
	return isValid
}

func hasExcludedExtension(path string) bool {
	for _, ext := range excludeExt {
		if strings.HasSuffix(strings.ToLower(path), ext) {
			return true
		}
	}
	return false
}

// printEmails lists the collected addresses; verbose mode also shows the source page.
func printEmails() {
	if verbose {
		fmt.Println("\nExtracted emails:")
		for email, sourceURL := range emailSet {
			fmt.Printf("%s (found on: %s)\n", email, sourceURL)
		}
	} else {
		fmt.Println("\nfound emails:")
		for email := range emailSet {
			fmt.Println(email)
		}
	}
}
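One detail worth checking in the link handling above: `resolveURL` keeps URL fragments, so `/about` and `/about#team` count as different pages and the same document can be fetched more than once. Below is a minimal standalone sketch of resolving hrefs, dropping fragments and filtering by host using only `net/url`. The helper name `sameHostLinks` and the sample URLs are hypothetical and not part of the posted program.

```go
package main

import (
	"fmt"
	"net/url"
)

// sameHostLinks (hypothetical helper) resolves each href against base and keeps
// only http(s) URLs on host, with fragments removed and duplicates dropped.
func sameHostLinks(base string, hrefs []string, host string) []string {
	baseURL, err := url.Parse(base)
	if err != nil {
		return nil
	}
	seen := make(map[string]bool)
	var out []string
	for _, href := range hrefs {
		ref, err := url.Parse(href)
		if err != nil {
			continue // skip malformed hrefs
		}
		abs := baseURL.ResolveReference(ref)
		abs.Fragment = "" // "#top" and the bare page are the same document
		if abs.Scheme != "http" && abs.Scheme != "https" {
			continue // drops mailto:, javascript:, tel: and similar
		}
		if abs.Hostname() != host {
			continue // stay on the requested host
		}
		s := abs.String()
		if !seen[s] {
			seen[s] = true
			out = append(out, s)
		}
	}
	return out
}

func main() {
	hrefs := []string{"/about", "contact.html", "#top", "/about#team",
		"https://other.example.org/x", "mailto:info@example.com"}
	for _, l := range sameHostLinks("https://example.com/team/", hrefs, "example.com") {
		fmt.Println(l)
	}
}
```

Deduplicating per page like this is only a partial measure; the `visitedURLs` map in the posted code still does the cross-page check.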


Why not love Perl?


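Code:

# Read input.txt line by line and write every address found to xss.txt.
open my $in, '<', 'input.txt' or die "Cannot open input.txt: $!";
open my $out, '>', 'xss.txt' or die "Cannot open xss.txt: $!";
while (my $line = <$in>) {
    print $out "$1\n" while $line =~ /([a-zA-Z0-9_.+-]+\@[a-zA-Z0-9-]+\.[a-zA-Z0-9.-]+)/g;
}

- Thread starter

- #11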

Bro, please, there is a reason I posted this. Don't trash my thread; if you can't do it, leave.