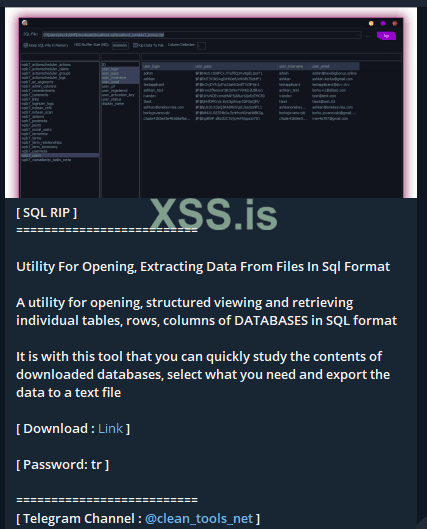

I want to convert a MySQL dump file, which I exported from a database I had access to, into an Excel file so that it is readable as tables, rows, and columns.

Could anyone please tell me how I can do this without connecting to the database?

I only have the dumped file.